The 0.04%

The architects building the technology stack you already live inside.

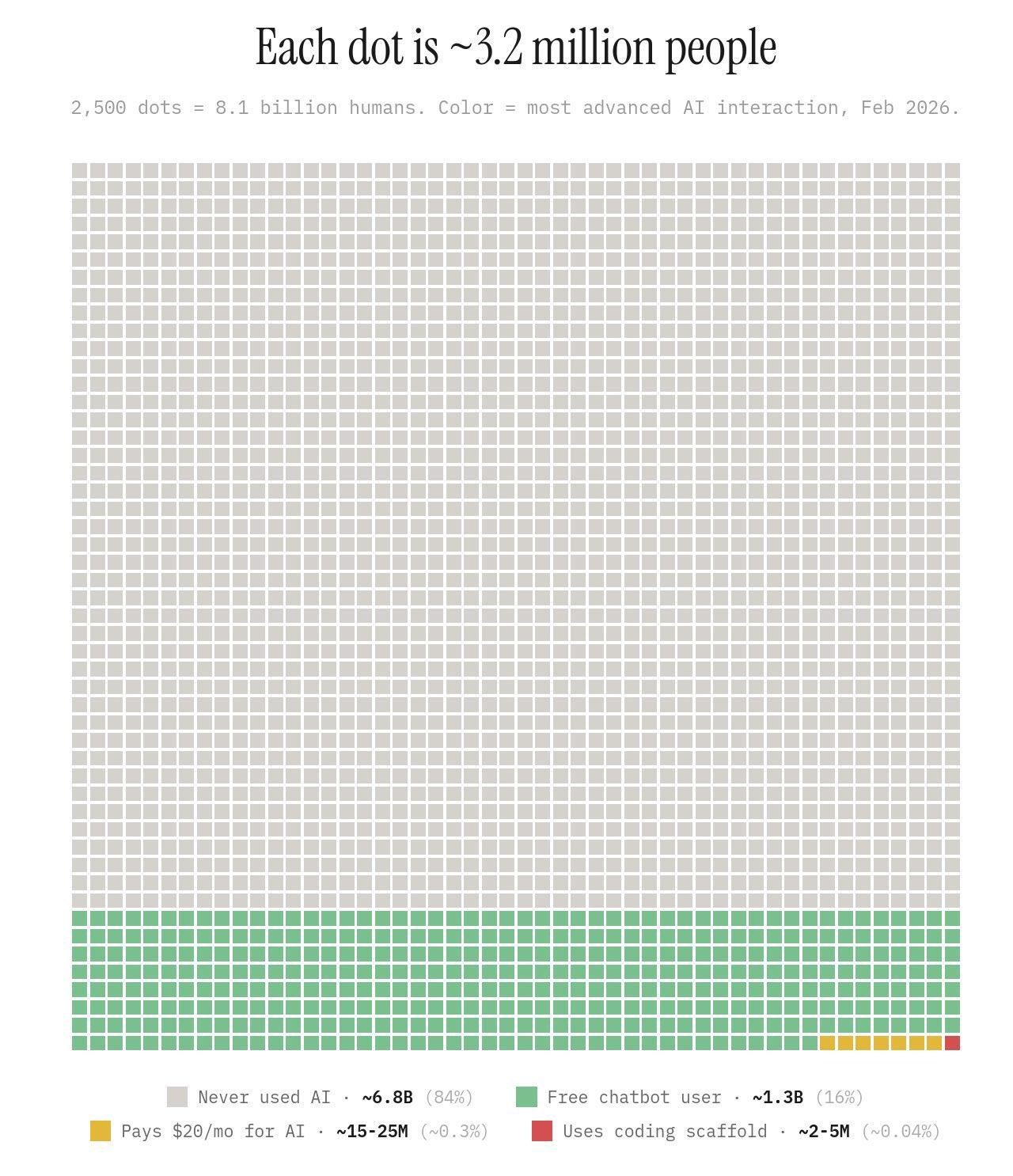

A chart by Damian Player has been circulating this week. Not a McKinsey quadrant or a Gartner hype cycle. Just 2,500 dots on a grid. Each dot represents roughly 3.2 million people. Together, they account for every human being alive: 8.1 billion of us. The dots are colour-coded by each person's most advanced AI interaction as of February 2026.

Grey for people who have never used AI. Green for free chatbot users. A thin band of orange for people paying twenty dollars a month. And a barely visible sliver of red for those using AI coding tools to build systems.

The grey takes up nearly the entire image. Row after row after row. Eighty-four percent of the world. 6.8 billion people who have never opened ChatGPT, never asked Claude a question, never prompted Gemini for a summary.

At the bottom, a strip of green. Sixteen percent. Roughly 1.3 billion people who have used a free chatbot at least once.

Then the orange. A few dots. Fifteen to twenty-five million people. Roughly 0.2 to 0.3 percent.

Then the red. You could almost miss it. Two to five million. 0.04 percent of humanity.
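It helps to feel how small these bands really are. The sketch below recomputes each band's share of humanity and its size in dots from the figures quoted above. It takes those figures at face value, and the band labels are shorthand taken from the descriptions in this piece rather than the chart's own legend.

```python
# Figures as quoted in the chart description above, taken at face value.
WORLD_POPULATION = 8.1e9   # every human being alive
DOTS = 2_500               # dots on the grid

# Each dot stands for roughly 3.24 million people.
PEOPLE_PER_DOT = WORLD_POPULATION / DOTS

# (low, high) headcounts for each band, as quoted in the text.
BANDS = {
    "grey: never used AI": (6.8e9, 6.8e9),
    "green: free chatbot": (1.3e9, 1.3e9),
    "orange: paid subscription": (15e6, 25e6),
    "red: AI coding tools": (2e6, 5e6),
}


def band_stats(low: float, high: float) -> tuple[float, float, float, float]:
    """Return (low %, high %, low dots, high dots) for a headcount range."""
    return (
        100 * low / WORLD_POPULATION,
        100 * high / WORLD_POPULATION,
        low / PEOPLE_PER_DOT,
        high / PEOPLE_PER_DOT,
    )


if __name__ == "__main__":
    for name, (low, high) in BANDS.items():
        pct_lo, pct_hi, dots_lo, dots_hi = band_stats(low, high)
        print(f"{name}: {pct_lo:.3f}% to {pct_hi:.3f}% "
              f"({dots_lo:.1f} to {dots_hi:.1f} dots)")
```

On these numbers the red band works out to between roughly 0.6 and 1.5 dots out of 2,500, or about 0.025 to 0.06 percent of humanity, with 0.04 percent sitting near the middle of that range.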

Most people sharing this chart are reading it as a story about adoption. About how early we still are. About the distance between the AI conversation and the AI reality.

That reading is correct. It is also the least interesting thing the chart has to say.

The thing adoption metrics miss

We have been trained to think about technology through the lens of consumer adoption. The smartphone curve. The social media S-curve. The internet penetration chart. In each case, the story was the same: a technology starts with a few million users, crosses a tipping point, and reaches billions. Adoption equals impact.

AI does not work this way.

The 6.8 billion people who have never typed a prompt are not untouched by artificial intelligence. Their insurance premiums are already being priced by it. Their CVs are being filtered by it. Their mortgage applications are being scored by it. Their medical images are being read by it. The news they see, the prices they pay, the routes they drive, the jobs they are offered or denied: all of these are increasingly shaped by systems they have never seen, never used, and never consented to.

“Adoption” implies a choice. What is actually happening is deployment.

And deployment does not require the participation of the people it affects.

I work with organisations building and deploying these systems. I sit in rooms where executives are embedding AI into processes that will affect thousands of customers, and those customers will never know. Not because anyone is hiding it. Because the nature of infrastructure is that the people inside it do not see it. The chart does not show an adoption gap. It shows a visibility gap. It asks us to notice the difference between who uses AI and who AI is being used on.

The architecture of invisible power

Every major system that governs daily life was designed by a small number of people and experienced by everyone else. Tax codes. Urban planning. Supply chain logistics. Financial instruments. Insurance actuarial tables. Credit scoring models. You did not adopt any of these systems. They adopted you. You were born into them or they were deployed around you, and by the time you encountered them they were simply how things worked.

The people who designed those systems were never a majority. They were not even a meaningful minority. A few hundred urban planners shaped the cities that billions inhabit. A few dozen economists designed the monetary frameworks that determine the purchasing power of your salary. A small committee in Basel, through the Basel Accords, defined how banks around the world assess risk, which determines whether you can get a loan, which determines whether you can buy a house.

Nobody waited for universal adoption of financial regulation before it changed everyone’s life.

AI is following the same pattern. Faster. Broader. With fewer of the slow institutional checks that gave previous infrastructure time to be understood, debated, and shaped by the people living inside it. A tax code takes years to draft and passes through legislative scrutiny. An AI model can be deployed to millions overnight. The power dynamic is the same. The velocity is new.

The 0.04 percent are not early adopters

Look at the red dots again. Two to five million people using AI coding tools and scaffolds. Cursor. GitHub Copilot, used in depth. Claude Code. Agentic development environments.

The technology industry calls these people “early adopters” as if they are the first customers of a new product category, ahead of the curve but on the same curve as everyone else. That framing is wrong. These people are not waiting for the rest of the world to catch up. They are building the systems the rest of the world will live inside.

I work alongside people in this group. What strikes me is not their technical skill but their architectural influence. They are not writing code for the sake of code. They are making design decisions about how intelligence flows through an organisation, how decisions get made, what gets automated and what stays human. Those decisions will compound. They will become the defaults that millions of people encounter without ever knowing a choice was made.

The person who uses ChatGPT to summarise an article is an adopter. The person who builds an AI system that determines which articles get written, which get promoted, and which get buried is an architect. The person who asks an AI to help draft an email is an adopter. The person who builds the AI layer that processes, triages, and responds to a million emails before any human reads them is an architect.

The 0.04 percent are not ahead on the adoption curve. They are above it. They are building the infrastructure that will define what AI means for the other 99.96 percent.

A few thousand engineers, funded by DARPA and working at places like CERN, built the internet and the web. A few hundred developers at Netscape and early web companies shaped what it felt like to use. By the time "adoption" reached billions, the architecture was set. The fundamental decisions about how information would flow, how attention would be monetised, how identity would work online: those were made by a vanishingly small number of people long before the average person sent their first email.

We are in that window again. And the window is smaller this time.

What the green strip actually tells us

The 1.3 billion free chatbot users in that green band are the most misunderstood cohort on the chart.

They are not in the early stages of a journey that ends with them building AI systems. Most of them are not on that journey at all. They are experiencing a consumer interface to a technology whose real implications are happening elsewhere. They are tourists in someone else’s architecture.

The relationship between a person asking ChatGPT for recipe ideas and the AI systems reshaping global labour markets is roughly equivalent to the relationship between someone browsing Facebook in 2010 and the surveillance advertising infrastructure being built beneath their feed. Same technology. Entirely different planes of engagement. Entirely different levels of agency.

The orange strip tells a similar story from a different angle. Fifteen to twenty-five million people paying for AI subscriptions. OpenAI has 35 million paying users across its products, but 900 million people use ChatGPT weekly: a conversion rate of roughly four percent. The vast majority use it casually, occasionally, for tasks they could have done another way. They are not building competence. They are renting convenience.

This is where the chart matters for anyone running a business or leading a team. The competitive advantage of AI is not measured by who has a subscription. It is measured by who has changed how they think, how they build, and how they make decisions because of it. That population is far smaller than any adoption metric suggests.

The myth of the trickle down

The democratisation thesis goes something like this: the tools will get easier, the interfaces will get simpler, the cost will come down, and eventually everyone will be empowered by AI. It contains a grain of truth wrapped in a much larger assumption.

The assumption is that the value of AI lives primarily in individual use. That the transformation is about each person having a smarter assistant. That the future is ChatGPT for everyone.

But look at how previous infrastructure technologies actually played out. The internet did not democratise power. It concentrated it. The ability to publish was democratised. The ability to reach an audience, to monetise attention, to shape information flows: that was concentrated into a handful of platforms. The tools were available to everyone. The leverage was captured by very few.

Social media repeated the pattern. Everyone got a voice. A few companies got the microphone, the amplifier, and the advertising revenue.

AI is following the same trajectory. The interface is democratised. You can talk to ChatGPT right now for free. But the ability to build AI systems that operate at scale, that reshape workflows, that make decisions affecting millions of people: that ability sits with the 0.04 percent. And the gap between using AI and building with AI is not shrinking. It is widening.

More powerful tools in the hands of a small number of architects do not automatically distribute power more broadly. They can just as easily concentrate it. I would argue they already are.

Why this is a governance story, not a technology story

We have been talking about AI as a technology adoption story. Will your company adopt AI? Will your industry be disrupted? Will you learn to prompt? These are the questions that dominate boardrooms and conferences and LinkedIn feeds.

The chart reframes all of them.

The relevant question is not about adoption. It is about architecture. Who is building the systems? What values are embedded in them? Whose interests do they serve? Who gets to inspect them? Who has the power to change them?

The 84 percent are not waiting to adopt AI. They are already inside AI systems. They just do not know it. And the decisions about how those systems work are being made by a group so small it barely registers on a chart of humanity.

Previous generations of invisible infrastructure at least had the benefit of slow deployment. Financial regulation evolved over decades. Urban planning codes were debated in council chambers. Tax policy went through legislative processes that, however imperfect, at least created a surface area for public engagement.

AI has no equivalent friction. A model can be trained, deployed, and affecting millions before any regulatory body has finished writing the first draft of a framework to assess it. The architecture is being built while the discussion about what the architecture should look like has barely begun.

Every system I help design embeds choices about what gets prioritised, what gets surfaced, what gets ignored. Every AI architect working today is making equivalent choices. Multiply that across every team building intelligence systems right now and you begin to see the scale of what is being decided, quietly, by the smallest group of people on the chart.

The chart as strategic intelligence

The competitive landscape is not divided between companies that have adopted AI and companies that have not. It is divided between companies that have people operating in the orange and red zones and companies whose people are still in the grey and green. That gap is not a technology gap. It is a comprehension gap. A capability gap. An architectural gap.

The organisations that will define the next decade are not the ones with the most AI subscriptions. They are the ones with people who understand what AI makes possible at a systems level. People who can see the second- and third-order implications. People who can build, not just use.

You cannot close this gap with a training programme. You cannot close it by giving everyone a ChatGPT licence. You close it by having people in your organisation who operate at the architectural level, who understand how intelligence systems work and know how to position the organisation within them.

That population is 0.04 percent of the world. If you have them, you have an advantage that most organisations do not yet understand they are missing. If you do not have them, no amount of AI adoption will compensate.

The window

The chart shows a moment in time. February 2026.

The decisions being made right now by the smallest sliver of people on that chart will determine the shape of systems that billions of people will live inside for decades.

The decisions made by a small number of internet architects in the 1990s still shape how information flows today. The choices made by early social media engineers in the late 2000s still determine how public discourse works. Those windows closed. The architecture became infrastructure. And infrastructure is extraordinarily difficult to change once it is set.

The AI window is open now. The architecture is still being written. The question is not whether you will adopt AI. You are already inside it.

The question is whether you will have any hand in shaping it.

If this resonated, subscribe. The next piece unpacks what it actually means to operate at the architectural level, and why the distinction between using AI and building with AI is the most important strategic question most organisations are not yet asking.

Craig Hepburn is an AI strategist and builder, Perplexity Fellow, and former Chief Digital Officer at Art Basel and UEFA. He works across technology, business, and system design to shape how AI operates responsibly in the real world.